Should Social Media Platforms Be Held Responsible for Misinformation? The Truth Behind Accountability

Introduction

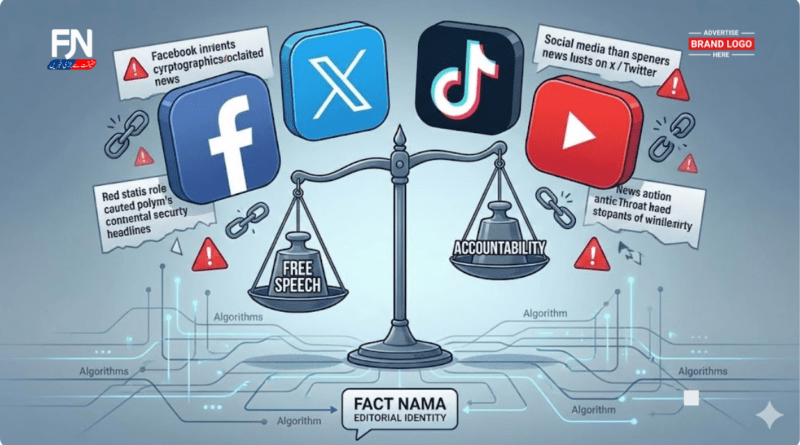

Social media platforms have transformed how people consume news, debate politics, and form opinions. For millions of Americans, platforms like Facebook, X (formerly Twitter), TikTok, and YouTube are now primary sources of information. But with this influence comes a pressing question: should social media platforms be held responsible for misinformation shared on their networks?

From election falsehoods to public health myths, misinformation has proven capable of shaping real-world behavior, undermining trust, and even causing harm. Critics argue platforms profit from engagement while avoiding accountability. Defenders warn that holding platforms responsible could threaten free speech and innovation.

This debate sits at the intersection of law, ethics, technology, and democracy. Understanding it requires more than headlines—it requires examining how platforms operate, what the law allows, and what responsibility looks like in a digital age.

What Is Misinformation on Social Media?

Misinformation refers to false or misleading information shared without the intent to deceive. On social media, it can include:

- Incorrect news reports
- Misleading headlines
- False health advice
- Edited images or videos
- Outdated or decontextualized content

Unlike disinformation, misinformation often spreads unintentionally—but its impact can be just as damaging, especially when amplified by algorithms designed to reward engagement.

Why Misinformation Spreads So Easily Online

Algorithm-Driven Amplification

Social media platforms prioritize content that triggers emotional reactions—anger, fear, or outrage—because it keeps users engaged. Unfortunately, misinformation often performs well under these conditions.

Speed Over Accuracy

Traditional journalism relies on verification. Social media favors speed. False claims can reach millions before corrections appear.

Low Barriers to Publishing

Anyone can post content without editorial oversight, making platforms vulnerable to inaccuracies.

Confirmation Bias

Users tend to engage with content that reinforces existing beliefs, allowing misinformation to circulate within echo chambers.

The Case for Holding Social Media Platforms Responsible

1. Platforms Shape Information Flow

Social media companies are not neutral bulletin boards. Their recommendation systems actively decide what users see, when they see it, and how often.

2. Public Harm Is Documented

Research from institutions like Pew Research Center shows widespread concern among Americans that false information online is harming democracy and public trust.

3. Profit Incentives Exist

Platforms generate revenue from ads placed next to viral content—accurate or not. Critics argue that benefiting financially creates ethical responsibility.

4. Precedent in Other Industries

Broadcast television and radio are subject to regulation, and print publishers can be held liable for what they publish. Supporters argue social media should not be exempt simply because it is digital.

The Case Against Platform Liability

Free Speech Concerns

Critics warn that forcing platforms to police content aggressively could lead to over-censorship and suppression of legitimate speech.

Scale and Feasibility

Billions of posts are published daily. Fact-checking every piece of content is practically impossible.

Legal Protections

In the United States, Section 230 of the Communications Decency Act limits platform liability for user-generated content, allowing the internet to function at scale.

Risk of Government Overreach

Some fear government-mandated moderation could politicize truth and erode civil liberties.

Section 230: Why the Law Matters

Section 230 is often described as the law that “created the modern internet.” It generally protects platforms from being treated as publishers of user content while allowing them to moderate in good faith.

However, critics argue the law, enacted in 1996, was written before:

- Algorithmic feeds
- Influencer economies
- AI-driven recommendations

As platforms evolved from passive hosts to active curators, calls for reform have grown louder.

Are Platforms Responsible for Algorithms?

One of the most critical questions is not whether platforms should be responsible for what users say, but whether they should be accountable for how content is promoted.

Algorithms:

- Decide which posts go viral
- Can repeatedly recommend harmful misinformation
- Are designed by platforms, not users

This distinction has fueled legal and ethical debates about whether responsibility lies in a platform's design choices rather than in the speech itself.

Mental Health, Safety, and Misinformation

Misinformation is not only a political issue—it is a public health and safety concern.

Examples include:

- False medical advice
- Harmful online challenges
- Conspiracy theories targeting vulnerable communities

Studies from Common Sense Media and other organizations link exposure to harmful online content with increased anxiety, misinformation acceptance, and reduced trust in institutions.

What Responsibility Could Look Like (Without Censorship)

Accountability does not necessarily mean deleting every false post.

Possible Approaches:

- Slowing the spread of unverified content
- Labeling disputed claims
- Reducing algorithmic amplification of known falsehoods
- Transparency about recommendation systems
- Supporting independent research access

These measures focus on risk reduction, not speech suppression.
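To make the idea of "friction, not removal" concrete, here is a minimal Python sketch of how labeling and reduced amplification might work together in a feed. It is an illustration only, not a description of any real platform's system: the `Post` fields, the `disputed` flag (assumed to come from an independent fact-checker), and the `DISPUTED_PENALTY` value are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    engagement: float       # hypothetical raw engagement score
    disputed: bool = False  # hypothetical flag set by an independent fact-checker


# Illustrative value only; not taken from any real platform.
DISPUTED_PENALTY = 0.25


def ranking_score(post: Post) -> float:
    """Return the feed-ranking score, reduced for disputed posts."""
    if post.disputed:
        return post.engagement * DISPUTED_PENALTY
    return post.engagement


def build_feed(posts: list[Post]) -> list[tuple[str, str]]:
    """Rank posts by adjusted score. Nothing is deleted; disputed posts get a label."""
    ranked = sorted(posts, key=ranking_score, reverse=True)
    return [(p.post_id, "Disputed claim" if p.disputed else "") for p in ranked]


posts = [
    Post("a", engagement=100, disputed=True),
    Post("b", engagement=40),
    Post("c", engagement=10),
]
feed = build_feed(posts)
```

In this sketch the disputed post "a" stays in the feed with a label, but it ranks below "b" even though its raw engagement is higher. The point is the design choice: the post remains visible and shareable, while the system stops rewarding it for going viral.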

Common Myths About Platform Responsibility

Myth 1: Responsibility Equals Censorship

Reality: Transparency and friction are not censorship.

Myth 2: Users Alone Are to Blame

Reality: Platforms shape visibility and engagement.

Myth 3: Fact-Checking Is Impossible

Reality: While perfection is impossible, mitigation is achievable.

Expert Perspectives

Media scholars and legal experts increasingly agree that the debate is no longer binary.

Rather than asking whether platforms should be responsible, many ask:

- Responsible for what?
- Responsible how?
- Responsible to whom?

The emerging consensus suggests a shared responsibility model involving platforms, users, educators, and policymakers.

The Role of Media Literacy

Accountability does not end with platforms. Users must also develop the skills to evaluate information critically.

This is where media literacy and critical media literacy become essential—helping people question sources, motives, and framing before sharing content.

Actionable Steps for Platforms and Users

For Platforms:

- Increase transparency
- Invest in safety-by-design
- Collaborate with independent researchers

For Users:

- Verify before sharing
- Follow trusted news sources
- Learn basic fact-checking skills
- Pause before reacting emotionally

FAQs

Should social media platforms be held responsible for user content?

Most experts argue platforms should not be liable for individual posts but should be accountable for harmful system-level design choices.

Why should social media companies take responsibility?

Because their algorithms influence public discourse and real-world behavior at scale.

Should platforms be responsible for mental health impacts?

There is growing agreement that platforms should consider foreseeable harms when designing engagement systems.

Should platforms be held responsible for cyberbullying?

Many believe platforms should take stronger preventive action without criminalizing users.

What is the 5-5-5 rule on social media?

A digital wellness concept encouraging balanced screen time and mindful consumption.

Conclusion

So, should social media platforms be held responsible for misinformation? The answer is not absolute—but accountability is unavoidable.

Platforms are no longer passive hosts. They are powerful information gatekeepers shaping how society understands reality. While free speech must be protected, ignoring the role platforms play in amplifying misinformation risks long-term damage to public trust, health, and democracy.

A balanced approach—one that respects rights while addressing harm—is essential. Responsibility does not mean control of truth, but care in design, transparency, and ethical governance.

For deeper insight, explore related Fact Nama explainers on the importance of media literacy, critical media literacy, and the difference between misinformation, disinformation, and malinformation.


🌐 Sources

- Pew Research Center
- Common Sense Media
- Reuters
- BBC
